Image: Black Mirror (Season 6)

Why does this matter?

Because, in practice, it affects how you research, write, make decisions, and consume information every single day.

The article argues that AI is reshaping perception, work, and decision-making at scale, while society still understands very little about data collection, the limited transparency of Big Tech companies, and the concentration of power behind this mediation.

Public discussion about artificial intelligence still tends to lag behind what is already happening in practice. While most people still see AI as a productivity tool, a text assistant, or an impressive novelty, the real transformation is taking place at a much deeper level: mass data collection, integration with real systems, automation of mental processes, the growing mediation of language, and the concentration of power within private infrastructures. The problem no longer lies only in what these technologies do in front of the user, but in how they silently reorganize the relationship between people, information, behavior, decision-making, and reality.

This advance does not occur in an environment of full collective awareness. On the contrary, it relies precisely on a condition of social and informational vulnerability. Millions of people hand over routines, preferences, commands, doubts, language patterns, and ways of thinking to systems that learn from this continuous flow, almost always without any real understanding of the scale of the collection, the limits of transparency, or the interests that shape this operation. Data donation does not happen only when someone publishes something or accepts a term; it also happens in the way people ask, delegate, correct, trust, and transfer mental steps to increasingly natural interfaces.

That is why the debate about AI needs to leave the promotional field and enter the structural one. It is not enough to ask whether the technology is useful, inevitable, or dangerous. It is necessary to observe how it operates, who controls its infrastructure, which capabilities remain outside public reach, how visibility is managed, and why the transparency available remains far below the level of power already at stake. The central issue is not only the advance of the machine, but human vulnerability in the face of systems that filter language, perception, and decision-making on a growing scale.

Data, collection, and vulnerability: what we are giving away

A large part of the population still imagines that the relationship with artificial intelligence begins and ends at the surface of the interface. The most common impression is that the user simply asks a question, receives an answer, and moves on. But that view no longer accounts for reality. The advancement of AI depends on far more than sophisticated models; it depends on a continuous flow of language, behavior, human correction, revealed preferences, usage patterns, and integration with increasingly broad digital environments. In other words, society is not merely using these tools. It is feeding structures that learn from the way we think, ask, hesitate, trust, and delegate.

This point is decisive because it shifts the debate from “data” as something abstract to “data” as a concrete expression of vulnerability. It is not just about published texts, browsing histories, or public content captured at scale. It also involves telemetry, usage logs, repeated interactions, behavioral adjustments, correction patterns, and cognitive signals left behind in every prompt. Whenever someone uses AI to organize ideas, seek advice, structure a strategy, revise a text, summarize a problem, or decide on a course of action, they leave traces that go beyond the explicit content of the question. The system does not learn only from what is said. It learns from how it is formulated, adjusted, accepted, or rejected.

📌 We are not giving away only information; we are giving away behavior. This includes mental rhythm, delegation patterns, implicit criteria of trust, everyday language, and recurring ways of making decisions.

🔎 Vulnerability increases when collection dissolves into convenience. The more fluid, useful, and natural the interface appears, the less people tend to perceive the volume of signals being absorbed into the ecosystem.

⚠️ The asymmetry lies not only in the technology, but in awareness of it. While millions use these tools as an everyday extension of digital life, few understand how deeply that usage strengthens private infrastructures of mediation and power.

That is why the debate about data must stop being treated as a technical detail or a secondary concern. What is happening is a continuous donation of language, intention, and behavior to systems whose transparency remains partial and whose real capabilities are not fully visible to the public. The more this transfer happens without critical awareness, the more vulnerability stops being an exception and becomes a structural condition.

Convenience, dependence, and the lowering of awareness

The power of artificial intelligence lies not only in what it can do, but in the way it installs itself. Previous technologies required learning, adaptation, and some initial resistance. AI, by contrast, advances precisely because it reduces friction. It writes faster, summarizes better, organizes tasks, suggests directions, interprets vague commands, corrects flaws, and returns answers with an appearance of immediate coherence. This fluidity creates the impression that it is merely an efficient tool, when in practice it begins to occupy the space between intention and elaboration. The user no longer needs to go through the entire process of thinking, structuring, testing, and refining. In many cases, it becomes enough to ask.

This is the point at which convenience stops being comfort and becomes a mechanism of dependence. The more natural it becomes to outsource mental steps, the less noticeable the internal displacement that accompanies this practice. Little by little, activities that once required direct cognitive effort begin to be resolved through algorithmic mediation. Writing, synthesizing, researching, analyzing, planning, revising, arguing, and even deciding on preliminary directions become partially externalized actions. This does not mean that human intelligence disappears, but it does mean that a growing share of mental work begins to be organized outside of it.

🧠 The problem is not only using AI, but becoming accustomed to thinking through it. When external mediation becomes the norm, cognitive autonomy is no longer exercised in its full form and starts to operate under permanent assistance.

📌 Dependence is born not from collapse, but from comfortable adaptation. It is not necessary to lose capacity all at once in order to become vulnerable; it is enough to stop regularly exercising functions that once belonged to the internal process of elaboration.

⚠️ The lowering of awareness happens when ease replaces critical perception. The more the ready-made answer seems sufficient, the less space remains for investigation, comparison of sources, productive doubt, and independent formulation.

The deepest effect of this dynamic is not merely professional or technical. It is mental and cultural. A society that becomes accustomed to receiving synthesized thought, assisted decision-making, and ready-made language tends to lose intimacy with the process that precedes clarity. And when that happens at scale, convenience stops being just utility. It becomes an architecture of conditioning.

Closed programs, real risk, and private capability

The most sensitive part of AI’s advancement is not necessarily found in the most popular tools, but in the less accessible layers where capability, risk, and operational power begin to concentrate. While the public follows the visible surface of the sector through assistants, image generators, and productivity interfaces, another dimension is unfolding: models evaluated for more complex tasks, programs with restricted access, agentic workflows, code integrations, cybersecurity contexts, and institutional structures that manage what can be shown, by whom, and to what extent. It is in this zone that the distance between public perception and real capability becomes more significant.

This landscape can no longer be treated as a mere abstract hypothesis. Public initiatives and recent reports show that the laboratories themselves recognize that some capabilities now call for restricted access and specialized testing. Anthropic presents Claude Code as an agentic programming tool integrated into development workflows and technical automation, while Claude Mythos Preview was described in a restricted cybersecurity context within Project Glasswing, connected to more sensitive testing for vulnerability discovery and exploitation. At the same time, the AI Security Institute (AISI) in the United Kingdom has been testing frontier models in areas such as cyber, autonomy, and severe risks. This does not validate exaggerated narratives about an omnipotent AI capable of doing anything from a simple command. What it does validate is something perhaps more serious: the existence of a technical frontier more advanced than most of the public is following in real time.

📌 Closed programs do not exist only to protect technology, but also to control what becomes visible. When public exposure is selective, social perception of risk and capability is also being managed.

⚠️ The real risk lies not only in what is announced, but in the gap between what is already operationally possible and what the public still imagines to be fiction or exaggeration.

🔎 Private capability and public understanding do not advance at the same pace. This difference sustains an asymmetry in which few test, few assess, and few understand the technical depth of what is already being developed.

That is why discussing artificial intelligence seriously requires naming programs, institutions, and systems without falling into either propaganda or fantasy. The central point is not to invent absolute powers for the machine, but to recognize that the partial opacity surrounding these capabilities is, in itself, a structural part of the problem.

Regulation, opacity, and the management of visibility

As artificial intelligence advances, institutional efforts to create frameworks for evaluation, risk management, and containment also grow. But this layer needs to be examined with precision. Regulation does not mean full transparency, and publishing frameworks is not the same as providing real access to the deep functioning of these systems. What exists today is a contested field in which governments, laboratories, technical bodies, and major companies try to define minimum criteria for a technology that has already expanded faster than public oversight. In this context, regulation appears less as a guarantee of clarity and more as an attempt to impose limits on structures that remain broadly asymmetrical.

In recent years, some frameworks have become central to this landscape. The EU AI Act established a regulatory model based on risk categories, while the European AI Office became part of the governance structure responsible for its implementation. In the United States, the NIST AI Risk Management Framework has become an important reference for risk management, and organizations such as the OECD have helped consolidate principles for what is described as trustworthy AI. At the same time, institutes such as the AI Security Institute (AISI) have begun testing frontier models in critical areas, attempting to assess capabilities and potential harm scenarios. The problem is that all of this coexists with a structural limit: institutions observe, classify, and guide, but they do not fully control the visibility of what laboratories know, publish, or restrict. That is precisely where the management of visibility becomes a central part of the architecture of power.

📌 To regulate is not the same as to see. The existence of rules, frameworks, and specialized offices does not change the fact that much of the technical capability continues to be communicated in partial, selective, and strategic ways.

🔎 The public visibility of AI is calibrated. What reaches social debate usually comes through system cards, reports, official communications, and institutional framing that reveal fragments of the picture, not the whole landscape.

⚠️ Opacity does not disappear with formal governance. It simply changes shape, coexisting with supervisory mechanisms that still move more slowly than the technology itself.

That is why the challenge today is not only to create rules, but to understand that transparency itself has already become contested terrain. Whoever defines what can be seen, measured, and disclosed also shapes how society perceives risk, legitimacy, and urgency.

Transparency, influence, and the dispute over sovereignty

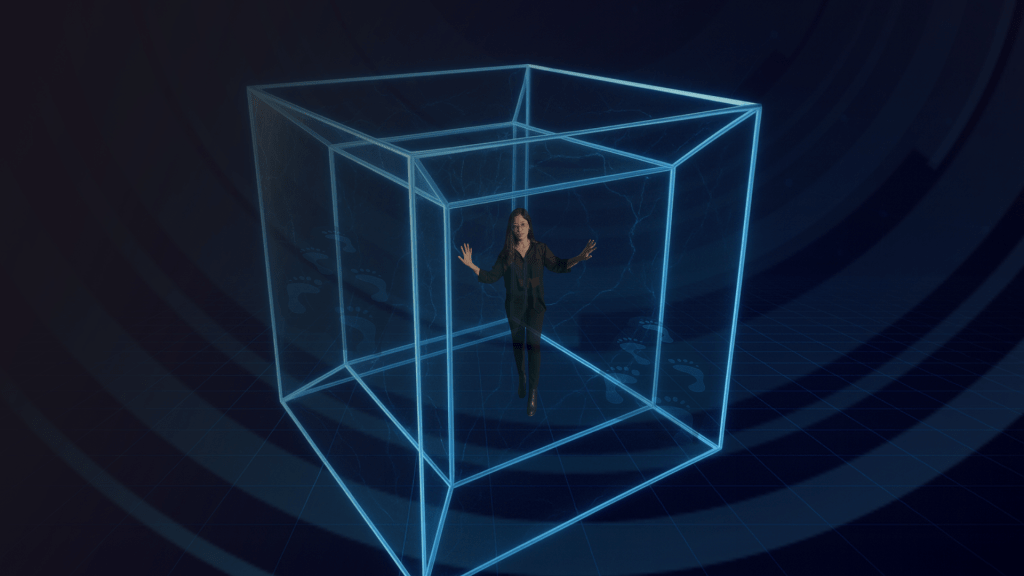

The debate around artificial intelligence can no longer be reduced to innovation, productivity, or technological fascination. What is underway is a profound reorganization of the relationships between language, perception, work, information, and power. Throughout this process, society is not merely adopting new tools, but adapting to a growing infrastructure of mediation in which private systems increasingly participate in how people write, research, decide, interpret, and access reality. The more ordinary this mediation becomes, the more influence stops appearing exceptional and starts operating as an environment.

That is precisely why transparency becomes a central issue. Not as an institutional promise, nor as an abstract value, but as a minimum criterion for any society that does not want to hand over perception, behavior, and decision-making to structures that remain deeply asymmetrical. Today, most people use increasingly powerful systems without proportional access to what feeds them, to the limits meant to contain them, to the criteria that shape them, or to the capabilities already operating beyond public reach. This distance between everyday use and real understanding is one of the most decisive forms of contemporary vulnerability.

📌 The issue is not only what AI does, but what it comes to organize. When a technology filters language, prioritizes responses, synthesizes reality, and mediates routines, it actively participates in the production of influence.

🔎 Partial transparency does not eliminate asymmetry. Publishing fragments, reports, and curated disclosures does not mean making visible the full architecture that sustains these systems.

⚠️ Sovereignty today is also cognitive sovereignty. In a landscape mediated by AI, preserving autonomy depends not only on technological access, but on the ability to recognize filters, understand structures, and refuse passivity in the face of convenience.

At the center of this debate is not only the machine, but the kind of society that is taking shape around it. If reality increasingly reaches us already filtered, then understanding who organizes that filtering ceases to be an intellectual curiosity and becomes a historical necessity.

When mediation becomes power

Artificial intelligence can no longer be treated merely as innovation, a trend, or technological convenience. What is underway is a silent reorganization of how the world is accessed, interpreted, and operationalized. Throughout this process, language, perception, work, decision-making, and behavior no longer circulate only through human mediation and increasingly come to depend on private infrastructures that select, synthesize, prioritize, and condition what reaches us. The problem, therefore, lies not only in the machine’s technical advance, but in the structural asymmetry between those who operate these systems and those who simply live under their influence.

The most sensitive point in all of this is that contemporary vulnerability does not arise only from a lack of access to technology, but from a lack of awareness of how it already reorganizes the experience of reality. Society hands over language, data, behavior, attention, and entire stages of mental elaboration to systems whose transparency remains partial, whose public capability does not reveal their full private capability, and whose influence grows precisely as their presence becomes more natural. In this context, discussing artificial intelligence seriously has ceased to be an exercise in curiosity or futurism. It has become a critical necessity for anyone who still wants to understand the filters shaping perception, autonomy, and power in the present.

Brunna Melo — Strategy with Soul, Words with Presence

Brunna Melo is a content strategist, editor, copywriter, and guardian of narratives that heal. She spent a decade working in public education, where she learned through experience that every form of communication begins with listening.

Her journey merges technique and intuition, structure and sensitivity, method and magic. Brunna holds a degree in International Relations, technical certifications in Human Resources and Secretarial Studies, a postgraduate diploma in Diplomacy and Public Policy, and is currently pursuing a degree in Psychopedagogy. From age 16 to 26, she worked in the public school system of Itapevi, Brazil, developing a deep understanding of subjectivity, inclusion, and language as a tool for transformation.

In 2019, she completed an exchange program in Montreal, Canada, where she solidified her fluency in French, English, and Spanish, expanding her multicultural and spiritual vision.

Today, Brunna integrates technical SEO, conscious copywriting, and symbolic communication to serve brands and individuals who wish to grow with integrity — respecting both the reader’s time and the writer’s truth. She works on national and international projects focused on strategic positioning, academic editing, content production, and building organic authority with depth and coherence.

But her work goes beyond technique. Brunna is a witch with an ancient soul, deeply connected to ancestry, cycles, and language as a portal. Her writing is ritualistic. Her presence is intuitive. Her work is based on the understanding that to communicate is also to care — to create fields of trust, to open space for the sacred, and to digitally anchor what the body often doesn’t yet know how to name.

A mother, a neurodivergent woman, an educator, and an artist, Brunna transforms lived experience into raw material for narratives with meaning. Her texts are not merely beautiful — they are precise, respectful, and alive. She believes that true content doesn’t just exist to engage — it exists to build bridges, evoke archetypes, generate real impact, and leave a legacy.

Today, she collaborates with agencies and brands that value content with presence, strategy with soul, and communication as a field of healing. And she continues to uphold one unwavering commitment: that every word written is in service of something greater.

FAQ

1. What does it mean to say that reality is being filtered by AI?

It means AI systems do more than answer questions. They summarize, prioritize, interpret, and organize information, influencing what reaches users as relevant, trustworthy, or disposable.

2. Does artificial intelligence collect more than basic data?

Yes. It also learns from usage patterns, corrections, preferences, prompts, hesitation, and delegation styles, turning behavior and language into strategic inputs for continuous improvement.

3. Why should AI not be seen only as a tool?

Because it already acts as an infrastructure of mediation. It not only performs tasks, but interferes with writing, search, decision-making, interpretation, and access to information at scale.

4. How can convenience create cognitive dependence?

When ready-made responses replace investigation, formulation, and analysis, users grow accustomed to outsourcing mental steps, reducing the ongoing exercise of intellectual autonomy and critical thinking.

5. What are closed programs in the context of AI?

They are systems, tests, or initiatives with restricted access, partial disclosure, and controlled visibility, often linked to more sensitive capabilities such as advanced automation, agentic programming, and cybersecurity.

6. What is private capability in artificial intelligence?

It is the set of resources, tests, integrations, and functions known internally by laboratories and institutions, but not fully exposed to the public through reports, products, or official statements.

7. What is the difference between public capability and public perception?

Public capability is what has already been documented or presented. Public perception is what society believes it understands. Between the two, there is a significant information gap.

8. Does regulation mean total transparency?

No. Regulation creates criteria, limits, and risk categories, but it does not guarantee full access to internal functioning, sensitive testing, or the private capabilities of these systems.

9. What does the article mean by selective opacity?

It means companies and institutions disclose only part of what they know, measure, or test, carefully managing public visibility without offering full understanding of the technology.

10. How does AI affect work beyond automation?

It reorganizes intellectual production, redistributes authorship, changes decision-making processes, and can weaken cognitive practices that were once central, making work more dependent on private systems.

11. Why does data collection increase our vulnerability?

Because we do not give away only objective information, but also language, behavior, mental patterns, and trust criteria, strengthening systems that operate with limited transparency relative to their power.

12. What does cognitive sovereignty mean in this context?

It is the ability to think, interpret, decide, and recognize filters without passively depending on algorithmic mediations that organize language, perception, and access to reality.

13. Does AI already represent a real risk, or is that still exaggerated?

The risk is already real, especially in automation, cybersecurity, information mediation, and power concentration. Exaggeration usually lies in specific claims, not in the structural seriousness of the problem.

14. Why is it important to name programs, institutions, and regulatory frameworks?

Because doing so removes the debate from abstraction. Naming concrete actors helps reveal how power is organized, who regulates, who restricts, and who controls visibility.

15. What is the main message of this article?

That artificial intelligence is not only transforming tools. It is reorganizing mediation, perception, work, and power, while society remains more vulnerable and less informed than it should be.
